Every organization experiences technical difficulties now and then; that’s just a fact of technology. But there is always a delicious irony when it happens to transhumanists, those starry-eyed prognosticators of unfathomable technical power and absolute technical mastery.

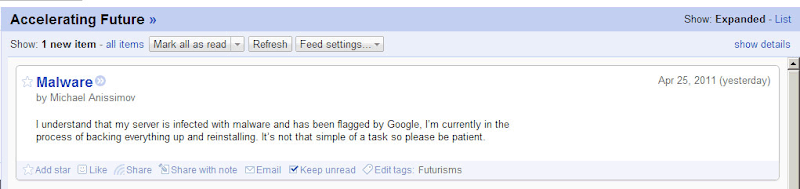

This week’s serving of ironic technical failure comes from one of our most reliable sources of easy material, Michael Anissimov. On Monday this ignominious post appeared on the RSS feed for his blog, Accelerating Future:

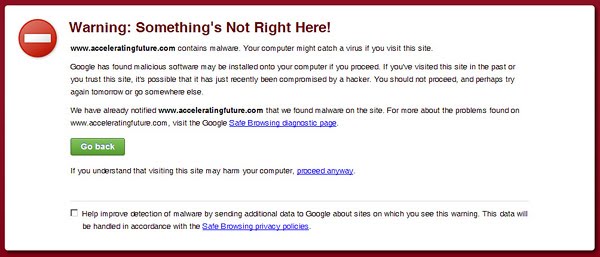

In case you can’t read the screenshot, it says “I understand that my server is infected with malware and has been flagged by Google, I’m currently in the process of backing everything up and reinstalling. It’s not that simple of a task so please be patient.” Rough times. I’d link to his site, but evidently the malware remains and it’s not safe to visit; if you open it in Google Chrome, you’ll see this:

What would the technical term for that be — an infestation of unfriendly un-AI?

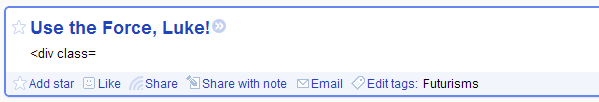

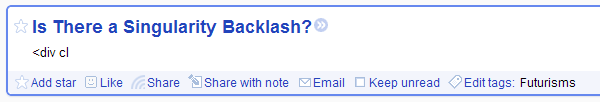

This is not an isolated incident, of course. Until recently, the RSS feed for H+ Magazine had some pretty impressive screw-ups on a regular basis. I happen to have taken some screenshots of my favorites (these are all real, and there are many more like these):

And probably the best (this one, I believe, is from the site itself):

(Sic on Phil B[r]owermaster.) One might wonder about the wisdom of entrusting Humanity+ to people who can’t seem to figure out HTML.

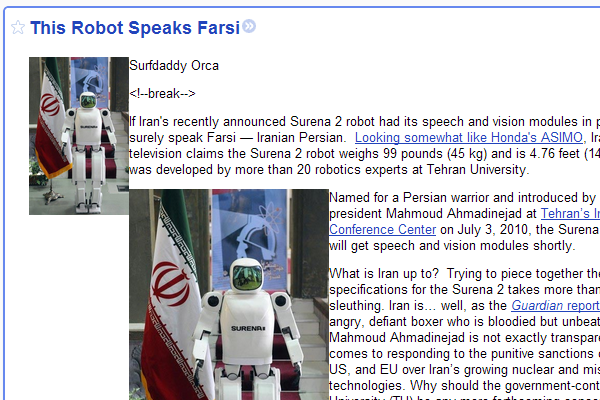

One last example. In a wrap-up post on the first day of the H+ Summit last summer, I noted:

> The talks on the first day were plagued by various technical problems, particularly on Apple computers, that delayed the presentations. The organizers joke this off by noting that at least it’s not as bad as Steve Jobs’s recent embarrassment with Apple products not working at an Apple conference. Yeah, except Steve Jobs is only suggesting that we purchase his computers, not that we literally live in them.

I wanted to dig up some video clips to actually show you what I was talking about, but when I went to the streaming video feed for the conference and clicked “More Videos” to see if there was some sort of archive, this — no joke — is what I found:

[UPDATE: See the follow-up post here.]

Futurisms

April 26, 2011

In my writings, I always stress that technology fails, and that there are great risks ahead as a result of that. Only transhumanism calls attention to the riskiest technologies whose failure could even mean our extinction.

Isn't Michael Anissimov noted for warning about things that can go wrong with AI? This isn't ironic; it's an illustration of his worries.

"Only transhumanism" calls attention to failures? Saaaay what?

Out here in the real world, engineers call attention to possible failure quite often. Transhumanists calling attention to possible failure are simply part of that pattern.

I'm making a fuss over this partly because of a common reaction to the recent nuclear problems in Japan ("They said this accident was impossible"). This is passed from activist to activist without coming in contact with reality. There's a certain lack of examples of anyone actually saying such an accident is impossible.